‘Eyes wide open’: The importance of responsible AI innovation

AI – artificial intelligence – has become part of our everyday language. What had been the preserve of sci-fi movies and tech aficionados has since been woven into our daily discourse. And from healthcare solutions to productivity to creativity, it’s becoming part of our lives.

But with AI advancing so quickly, its responsible development and deployment has become paramount. Research reveals that more than 60 percent of the British public supports laws and regulations to guide the use of AI. In a national survey of over 4,000 adults in Britain, people were asked about their awareness of, experience with, and attitudes toward different uses of AI.

Conducted by the Ada Lovelace Institute and The Alan Turing Institute, the research shows attitudes to AI are complex and nuanced across different contexts. The public sees clear benefits for many uses of AI, particularly in health, science, and security.

Concerns exist, however, regarding the reliance on AI for sectors such as recruitment, where workplaces may rely on technology, bypassing human professional judgement. Respondents also expressed concern about advanced robotics, with 72 percent unsure about driverless cars.

The research comes as techUK, the UK’s technology trade association, held its second annual Tech Policy Leadership Conference in London. Among the speakers on the agenda were the UK’s tech minister, Paul Scully, and Microsoft President Brad Smith, who gave their respective views on how to ensure responsible AI innovation.

A warning against dystopian narratives

Acknowledging the sense of urgency to deliver on regulation in a rapidly advancing digital age, tech minister Paul Scully said: “Good regulations help give confidence and helps develop markets.” Referencing the government’s white paper on AI regulation, which proposes a pro-innovation regulatory framework, Scully added: “AI has been moving at a rate of knots, and governmentally we need to work at speed to deliver on the 18-point framework we set out.”

UK government tech minister, Paul Scully, onstage at the techUK event in London

Recognising concerns about AI, Scully warned against overly negative narratives. “There is a dystopian point of view that we can follow here. There’s also a utopian point of view. Both can be possible.”

The risk of only focusing on the dystopian part, he added, would mean “missing out on all the good that AI is already functioning – how it’s mapping proteins to help us with medical research, how it’s helping climate change. It’s already doing all those things and will only get better at doing them.”

‘Eyes wide open’

Also attending the event was Microsoft President Brad Smith. He hailed what is an exciting time but cautioned, “We need to go into this new AI era with our eyes wide open. There are real risks and problems that we need to figure out how to solve.”

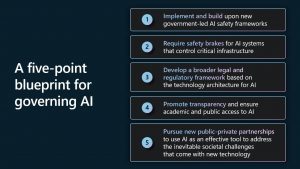

Smith reaffirmed Microsoft’s commitment to ethical AI innovation, underlining the company’s latest five-point blueprint for AI policy, law, and regulation, which addresses several current and emerging AI issues.

In such a fast-moving space, setting priorities is key and for Smith it starts with safety. “It’s vital that the technology is safe and remains subject to human control, that we have the ability to slow it down or turn it off if it’s not functioning the way we want.”

A call for safety reviews and licensing

Speaking candidly, Smith said: “I don’t think any country should be willing to completely put its trust in a set of companies. This technology is so powerful it can do so much good, and yet it also raises so many issues that it needs to be subject to the rule of law.”

“The companies that build and operate these systems are going to need to step up,” he said. “Microsoft is certainly included, and we’ve been working for six years to build the processes, the practices to train the personnel, to have the governance system inside Microsoft, all to ensure that AI is used responsibly.”

Applications that use AI sit at the top layer of the technology stack. Existing laws and regulations already govern many industries, such as financial services, and AI applications should comply with them.

Microsoft President Brad Smith gives a keynote at the techUK event

“Where the conversation is really going is below the applications layer to the models themselves,” said Smith. “There will likely need to be some evolution, and perhaps even new laws that will impact the applications.” As with transportation modes like airplanes or automobiles, Smith suggested that powerful AI models may require a safety review and a licensing process. He called for a decision-making authority for licensing to be established, adding that “in Microsoft’s view, there should be a licence.”

Striking a balance

Regulation is crucial but, as Smith acknowledged in his keynote, it will require a balance. “If regulation is too heavy, then innovation will proceed too slowly. If innovation goes too quickly, without sufficient regulation, then we could put safety at risk. We need to think this through, and we all need to talk it through together.”

Collaboration and international cooperation are also key. Smith called for countries to create a unified international AI research resource. “We’re far better off getting on the same page about what should be published, but more than that, creating a regulatory model that fits together with interoperable regulation can help avoid confusion.”

Recognising the UK as a key player, Smith said: “The UK is an intellectual powerhouse. We want to make sure that the entire economy has access to the use of these resources and ensure that the research in the great universities here continues.”

Smith concluded with an optimistic look to the future. “I think we should be excited about the promise of this new technology. We should have our eyes wide open about the challenges it will create. We should ask companies to step up. We should ask governments to go faster, but more than anything, we should ask all of ourselves and everyone else to find new ways to work together, because we’ll need to work together across borders. When AI reflects the world, it can truly serve the world.”